I've gotten a few questions lately about the CCD and C-CDA Payers Section. This is a vastly underutilized section in most implementations, but it could have great value in Patient to Provider communication, as it could eliminate the most annoying, costly (to get wrong), and possibly error-prone part of the registration process.

You know that part where they ask for your insurance card.

Below are some samples of insurance cards.

There are at least four and sometimes more identifiers on these cards, and they all identify different things. They are usually printed in fairly small type, with 8pt type being fairly common for the less important identifiers, and 6pt type on the back being fairly common for the list of phone numbers you need to call for different things. There's often no bar code or magnetic stripe (I guess it's not in the payer's best interest for these cards to be usable).

There are identifiers for the policy, which is often labeled "Group Number". This represents the identifier for the policy covering the healthcare activity. Then there's an identifier for the policy holder, usually called the subscriber identifier. But that gets confusing because some plans also call that the policy-holder's member number.

Then there's the member id (or member number). That identifies the family member being covered, and is usually the subscriber-id followed by a sequence number. Some plans use -1 for the subscriber, -2 for the spouse, and then children as -3 to -n in birth order. Others alphabetize by first name (as mine did). That makes my eldest daughter -2, my wife -3, and my youngest daughter -4.
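To make the two numbering schemes concrete, here is a small Python sketch. The names, birth years, and subscriber id are made up, and it assumes the subscriber always keeps -1 and the remaining suffixes are handed out without gaps; real plans vary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependent:
    first_name: str
    birth_year: int
    is_spouse: bool = False


def _number(subscriber_id, ordered_dependents):
    """Give the subscriber -1 and each dependent the next suffix in order."""
    ids = {"subscriber": f"{subscriber_id}-1"}
    for suffix, dep in enumerate(ordered_dependents, start=2):
        ids[dep.first_name] = f"{subscriber_id}-{suffix}"
    return ids


def spouse_then_birth_order(subscriber_id, dependents):
    """Scheme 1: spouse first, then children from oldest to youngest."""
    spouse = [d for d in dependents if d.is_spouse]
    children = sorted((d for d in dependents if not d.is_spouse),
                      key=lambda d: d.birth_year)
    return _number(subscriber_id, spouse + children)


def alphabetical(subscriber_id, dependents):
    """Scheme 2: dependents sorted by first name."""
    return _number(subscriber_id,
                   sorted(dependents, key=lambda d: d.first_name))


# Hypothetical family: a spouse and two children.
family = [
    Dependent("Carol", 1970, is_spouse=True),
    Dependent("Alice", 1998),
    Dependent("Dana", 2003),
]
print(spouse_then_birth_order("XYZ123", family))
print(alphabetical("XYZ123", family))
```

With this made-up family, the alphabetical scheme puts the eldest child ahead of the spouse, which is the same effect described above.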

Following that, there could be one or more plan identifiers. These are the identifiers for the specific kind of insurance plan that you have. They represent a particular kind of insurance plan, and from what I can tell, are managed by state insurance regulators. It's not uncommon to have a separate plan covering your regular care (e.g., ambulatory), and a separate plan for hospitalization.

OK, got that? Now, what about the payer ID? There isn't one yet, but I expect after the regulations pass and every payer has an identifier, you'll find that there as well.

Some cards also double as Pharmacy Benefit Management cards. Those will have two additional numbers on them, called RxBIN and RxGRP, which are routing numbers used to identify how to communicate with the PBM (effectively identifiers for the PBM).

When we built CCD, we started from a coverage model developed by the HL7 Financial Management workgroup. That model appears below:

Within CCD and C-CDA, there are three templates defined:

The Coverage Activity template represents the list of policies (or programs like CHIP, Medicaid, et cetera) that provide coverage to the patient. It contains one or more policy or program activities, each of which describes one component of the coverage. These can be sequenced (really prioritized) by the sequenceNumber field in the act relationship.

The policy activity has an identifier. This is the "policy number" or "group number" associated with the policy or program covering the patient.

The plan act defines the policy and is where you'd put the plan identifier.

The covered party is the patient. The identifier associated with them is where you put the member id.

The responsible party is the "policy holder", and is where you would put the subscriber id.

Finally, the Payer is who actually makes the payment, and is where you'd put the PBM routing numbers, or payer ids when those become available.

The HITSP C83 specification goes into great detail on this section of the CCD, explaining where the content goes. At the end of this post, you can find a full example that has been pieced together from various examples in that document.

The C-CDA follows a similar pattern. You should be able to cross-walk the templates yourself using the template map in the appendix of C-CDA.

-- Keith

<act classCode='ACT' moodCode='DEF'>
  <templateId root='2.16.840.1.113883.10.20.1.20'/>
  <templateId root='1.3.6.1.4.1.19376.1.5.3.1.4.17'/>
  <id root=''/>
  <code code='48768-6' displayName='Payment Sources'
    codeSystem='2.16.840.1.113883.6.1' codeSystemName='LOINC'/>
  <statusCode code='completed'/>
  <!-- Example 1, A health plan -->
  <entryRelationship typeCode='COMP'>
    <sequenceNumber value='1'/>
    <act classCode='ACT' moodCode='EVN'>
      <templateId root='2.16.840.1.113883.10.20.1.26'/>
      <templateId root='2.16.840.1.113883.3.88.11.83.5'/>
      <id root='2844AF96-37D5-42a8-9FE3-3995C110B4F8'
        extension='GroupOrContract#'/>
      <code code='IP' displayName='Individual Policy'
        codeSystem='2.16.840.1.113883.6.255.1336'
        codeSystemName='X12N-1336'/>
      <statusCode code='completed'/>
      <performer typeCode='PRF'>
        <!-- This example assumes an RxBIN of 699999 and an RxPCN of PZZZZ -->
        <assignedEntity classCode='ASSIGNED'>
          <id root='2.16.840.1.113883.3.88.3.1' extension='699999'/>
          <id root='2.16.840.1.113883.3.88.3.1.699999' extension='PZZZZ'/>
          <addr>…</addr>
          <telecom value='…'/>
          <representedOrganization classCode='ORG'>
            <name>…</name>
          </representedOrganization>
        </assignedEntity>
      </performer>
      <!-- Example 2, The patient is a dependent of the subscriber -->
      <participant typeCode='COV'>
        <time>
          <low value='20070209'/>
        </time>
        <participantRole classCode='PAT'>
          <id root='…' extension='MEMBERID#'/>
          <code code='DEPEND' displayName='dependent'
            codeSystem='2.16.840.1.113883.5.111' codeSystemName='RoleCode'/>
          <playingEntity>
            <name><given>Baby</given><family>Ross</family></name>
            <sdtc:birthTime value='20070209'/>
          </playingEntity>
        </participantRole>
      </participant>
      <participant typeCode='HLD'>
        <participantRole classCode='IND'>
          <id root='…' extension='SUBSCRIBERID#'/>
          <playingEntity>
            <name><given>Meg</given><family>Ellen</family></name>
            <sdtc:birthTime value='19600127'/>
          </playingEntity>
        </participantRole>
      </participant>
      <entryRelationship typeCode='REFR'>
        <act classCode='ACT' moodCode='DEF'>
          <id root='2844AF96-37D5-42a8-9FE3-3995C110B4FA' extension='PlanID'/>
          <code code='HMO' displayName='health maintenance organization policy'
            codeSystem='2.16.840.1.113883.5.4' codeSystemName='ActCode'/>
          <text>Health Plan Name</text>
        </act>
      </entryRelationship>
    </act>
  </entryRelationship>
</act>
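If you are on the receiving end of this section, pulling the card identifiers back out is mostly a matter of knowing which element holds which number. Here is a rough Python sketch of that, using only the standard library. It works on a trimmed fragment with made-up identifier values, and it assumes the elements are in no XML namespace; a real CDA document puts them in urn:hl7-org:v3 and needs the sdtc prefix declared, so the paths would have to be adjusted.

```python
import xml.etree.ElementTree as ET

# Trimmed fragment modeled on the example above; identifier values are made up.
PAYERS_FRAGMENT = """
<act classCode='ACT' moodCode='DEF'>
  <entryRelationship typeCode='COMP'>
    <sequenceNumber value='1'/>
    <act classCode='ACT' moodCode='EVN'>
      <id root='2844AF96-37D5-42a8-9FE3-3995C110B4F8' extension='GROUP-123'/>
      <performer typeCode='PRF'>
        <assignedEntity classCode='ASSIGNED'>
          <id root='2.16.840.1.113883.3.88.3.1' extension='699999'/>
        </assignedEntity>
      </performer>
      <participant typeCode='COV'>
        <participantRole classCode='PAT'>
          <id root='1.2.3' extension='SUB-9-2'/>
        </participantRole>
      </participant>
      <participant typeCode='HLD'>
        <participantRole classCode='IND'>
          <id root='1.2.3' extension='SUB-9'/>
        </participantRole>
      </participant>
      <entryRelationship typeCode='REFR'>
        <act classCode='ACT' moodCode='DEF'>
          <id root='2844AF96-37D5-42a8-9FE3-3995C110B4FA' extension='PLAN-7'/>
        </act>
      </entryRelationship>
    </act>
  </entryRelationship>
</act>
"""


def _ext(element, path):
    """Return the extension attribute of the first match, or None."""
    node = element.find(path)
    return node.get("extension") if node is not None else None


def read_coverage(xml_text):
    """List the policies in a coverage activity, ordered by sequenceNumber."""
    root = ET.fromstring(xml_text)
    policies = []
    for rel in root.findall("entryRelationship[@typeCode='COMP']"):
        policy = rel.find("act")
        seq = rel.find("sequenceNumber")
        policies.append({
            "priority": int(seq.get("value")) if seq is not None else None,
            "group_number": _ext(policy, "id"),
            "payer_ids": [i.get("extension") for i in
                          policy.findall("performer/assignedEntity/id")],
            "member_id": _ext(
                policy, "participant[@typeCode='COV']/participantRole/id"),
            "subscriber_id": _ext(
                policy, "participant[@typeCode='HLD']/participantRole/id"),
            "plan_id": _ext(policy, "entryRelationship[@typeCode='REFR']/act/id"),
        })
    # Policies without a sequenceNumber sort last.
    return sorted(policies,
                  key=lambda p: (p["priority"] is None, p["priority"] or 0))


coverage = read_coverage(PAYERS_FRAGMENT)
print(coverage[0])
```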

Wednesday, October 31, 2012

What Scares Me

Happy Halloween or Blessed Samhain, whichever you prefer. In light of the holiday (or perhaps in dark of it), I thought I'd make a list of the things that keep me awake at night:

- The increasing pace of technology advancement in Healthcare IT. What scares me here is that there are a lot of very interesting things going on, too many in fact to keep track of them all. There are two dangers:

- I'll miss something important that I should be paying attention to, and as a result, something might be selected that either isn't the best way to solve a problem, or doesn't fit well with other efforts.

- More scary than the first, is that something will get rushed through that is broken, and as a result, harm occurs to people. I don't know which would be worse, a case where I identified the issue and it got pushed through anyway, or a case where I didn't find the issue.

- My provider (or my kids' provider) won't get a portal this year or next. There's a slim chance of that for my provider, perhaps a slightly larger chance with regard to my kids' pediatrician, but it does concern me. There's precious little I can do about this other than to keep hammering my providers (which I do at every opportunity).

- Nobody will adopt standards that I want to work on, like Query Health, HQMF, ABBI. Given that Query Health is in pilots, HQMF v1 is being used to deliver Meaningful Use measures, HQMF v2 is easier to use, and ABBI PULL seems to have interest, it's just a matter of ensuring that I can put the time in on these to bring them to successful completion.

- C-CDA Harmonization with the IHE PCC TF will be more difficult than I thought. That's pretty likely, but I've already accounted for it in my plans for next year. It's going to require more attention to code and tools rather than manual efforts, which means that I'll probably be pulling a bigger load on that than I would like with respect to execution. The key to managing this one is delegating more non-coding efforts to others.

Tuesday, October 30, 2012

Automating the Transition Towards CCDA in IHE PCC

One of the discussions in the IHE PCC Planning meeting this week (being held mostly virtually due to travel challenges created by Hurricane Sandy) is about how to converge towards C-CDA instead of basing the profiles on CCD.

There are two challenges:

- C-CDA made some non-backwards compatible changes in the new templates, necessitating a transition strategy.

- C-CDA is focused on US requirements, and the IHE PCC-TF is used internationally. There are some US-specific vocabularies that simply won't fly internationally. Even SNOMED-CT will be challenging for some international users.

My hope is to use MDHT to capture the existing PCC Technical Framework rules (actually, to verify the transcriptions already available here), compare the rules to C-CDA, and apply some logic within Eclipse across the models to create new templates that move us towards C-CDA. When the results of the logic produce templates that are identical, we'd just adopt the C-CDA templates. When they didn't, we'd create new versions of IHE templates that adopted as many of the C-CDA constraints as possible.

With luck, I'd be able to output all the PCC Templates from MDHT.

In that way, a C-CDA document could become the US National Extensions to IHE. Perhaps we could even ballot the intermediate result as a DSTU through HL7, as an international realm version of C-CDA templates.

Fortunately, this work won't begin until (I hope), I've made more substantial progress finishing up Query Health and ABBI. Otherwise, you might as well just shoot me now.

I expect some will want me to use Trifolia for this effort. But there's a lot more I could do with MDHT, and the benefit is that MDHT is Open Source, whereas Trifolia isn't. And if I do it right, and what comes out of MDHT goes back in, I've already built a reference implementation and testing tool. I cannot do that with Trifolia today.

Keith

Monday, October 29, 2012

A Four-legged OAuth for ABBI

I've been thinking about how to address the million registration problem that I identified last week (actually, it was Josh Mandel who first pointed it out). I think I have a solution to the problem, and it revolves around the way the consumer key is exchanged.

In Twitter, the same client application on different consumers’ systems uses the same key and secret to connect to a singular data holder (twitter.com). In ABBI, an application will want to use the same key, yet a different secret for each data holder it connects to. The reason to use the same key is so that the client application can be consistently identified in the same way across multiple data holders.

It also provides a way to avoid collisions between client applications. The reason to use a different secret with each data holder is to ensure that no data holder's secret can be used with another data holder. This prevents someone from pretending to be a data holder in order to obtain all of an application's secrets.

The consumer key I propose to use is a URL identifying the application’s web page. It’s not unreasonable to assume that a commercial application will have its own web site, and while a little bit more challenging for the typical hacker, not a huge hurdle. In fact, I run a secure web site for free. It’s also not too hard to believe that someone would willingly host pages for “garage-apps” (things a developer like me would use to manage their own data).

The reason to use a different secret is so that the secret shared between a client application and a data holder can only be used with that data holder. This prevents a rogue from creating a system pretending to be a data holder in order to gain access to a client application’s secret. Surely they could obtain access to a secret, but it would do them no good with any other data holder, because the client application would just use a different secret for the other data holder.

It is important that the secrets used by the client application with the same data holder always be the same regardless of which consumer system is accessing that data holder, because most (if not all) OAuth implementations expect each key to be associated with one secret. Centralizing control of secrets isn’t something that you can expect the client application itself to manage. In fact, a new secret would have to be generated for every new data holder that appears in the ecosystem. This is another reason why the client application needs to be associated with a web site, because that becomes the single point of secret distribution for that client application.
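The rule described above (one key everywhere, one secret per data holder, and the same secret for a given data holder no matter which device asks) can be sketched in a few lines of Python. This is only an illustration: the ClientRegistrar class, its method name, and the blocked list are hypothetical, and none of it is part of OAuth or of any ABBI specification.

```python
import secrets


class ClientRegistrar:
    """Hypothetical secret store kept by one client application's web site."""

    def __init__(self, consumer_key, blocked=()):
        self.consumer_key = consumer_key   # the application's URL, same everywhere
        self._blocked = set(blocked)       # data holders this site refuses to trust
        self._secrets = {}                 # data holder URL -> secret

    def secret_for(self, data_holder_url):
        """Return the one secret used with this data holder, creating it once."""
        if data_holder_url in self._blocked:
            raise PermissionError(f"untrusted data holder: {data_holder_url}")
        if data_holder_url not in self._secrets:
            self._secrets[data_holder_url] = secrets.token_urlsafe(32)
        return self._secrets[data_holder_url]


registrar = ClientRegistrar("https://MyAbbiApps.com/MyABBI",
                            blocked=["https://rogue.example"])
first = registrar.secret_for("https://MyDataHolder.org")
again = registrar.secret_for("https://MyDataHolder.org")
other = registrar.secret_for("https://OtherDataHolder.example")
print(first == again, first == other)
```

A secret leaked to one data holder is useless at another, because every data holder gets its own entry in the table.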

Here is the workflow I’m presuming:

1) Client Application MyABBI is installed on my personal device.

2) During Device Configuration, the application is pointed to MyDataHolder.org as being one of the sources of data it needs to query.

3) Client Application MyABBI connects to its home web-server (MyAbbiApps.com/MyABBI), and asks for the secret key to use with MyDataHolder.org, as it has never connected to that Data Holder before. We don’t need to say much about how the client application communicates to the web site, nor do we need to say how the client application authenticates itself to that site, since both are under control of the client application developer. However, we do need to say that the communication must occur over a secure channel, and that the Client Application must authenticate itself. In fact, the client application could use the first step of the token request workflow to authenticate itself.

4) The Client Application Web Site for MyABBI responds with a secret key to MyABBI on my personal device. If the client application used the first step of the token request workflow, the response could be the same as how a server responds with a request token. During this stage, one of two things happens:

a) https://MyAbbiApps.com/ABBI has never encountered https://MyDataHolder.org before. In this case, the site makes a determination whether to trust MyDataHolder.org or not. If it chooses to trust MyDataHolder.org, the website behind MyAbbiApps.com/ABBI creates and stores a new secret associated with that data holder.

b) https://MyAbbiApps.com/ABBI has encountered https://MyDataHolder.org. If it is trusted, the web site returns the secret associated with that data holder, and if it isn’t trusted it returns some sort of error response.

5) Having obtained a consumer_secret, MyABBI can now begin the Authorization Workflow with https://MyDataHolder.org. The first step is for the client application to obtain a request token from the Data Holder.

6) About halfway through the process of trying to verify the request just made in the previous step, https://MyDataHolder.org will now need to obtain the client secret associated with the client application. If it already has that information, it could just reuse what it knows, since it usually will not have changed. But if it doesn’t, it needs to follow these steps:

a) Identify itself as https://MyDataHolder.org to https://MyAbbiApps.com/ABBI, and indicate that it is requesting the secret for the client application that identified itself to the Data Holder as being supported by that site.

b) Reject the OAuth Request Token request with an error code.

7) The https://MyAbbiApps.com/ABBI website will accept a request for a secret from a Data Holder. If the data holder trusts the client application’s website certificate (at https://MyDataHolder.org), communication continues; otherwise it stops at this point, and the Authorization workflow fails.

8) That request must contain the base URL for the Data Holder’s ABBI API (e.g., https://MyDataHolder.org/ABBI/api) and also a nonce associated with this request for the client application’s key.

9) The Client Application’s website (https://MyAbbiApps.com/ABBI) will send a request to the “Secret Exchange” end-point for the Data Holder (https://MyDataHolder.org). That request will contain: the sending website URL (https://MyAbbiApps.com/ABBI), the nonce given in step 8, and the client application’s secret needed to access resources.

10) The Data Holder will store the client secret associated with the client key if it recognizes the nonce, and return 200 OK. If it doesn’t recognize the nonce, it sends back an error message.

11) If https://MyAbbiApps.com gets back an error message, indicating that MyDataHolder.org didn’t recognize the nonce associated with the client secret, it should invalidate that secret.

12) The client application will restart the Authorization workflow with the data holder, and this time, it will succeed.
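The steps above can be walked through with a small in-memory simulation. This is a sketch under several assumptions rather than an implementation: the class and method names are hypothetical, direct method calls stand in for HTTPS requests, the asynchronous call in step 9 happens synchronously, and the trust decisions and OAuth signature checks are left out.

```python
import secrets


class DataHolder:
    """In-memory stand-in for the data holder (MyDataHolder.org)."""

    def __init__(self, url):
        self.url = url
        self.client_secrets = {}   # consumer key -> shared secret
        self.pending_nonces = {}   # nonce -> consumer key it was issued for

    def request_token(self, consumer_key, registrar):
        """Steps 5 and 6: issue a request token, or fetch the secret and reject."""
        if consumer_key in self.client_secrets:
            # Signature verification with the stored secret is omitted here.
            return {"status": 200, "oauth_token": secrets.token_urlsafe(16)}
        nonce = secrets.token_urlsafe(16)
        self.pending_nonces[nonce] = consumer_key
        registrar.secret_requested(self, nonce)   # steps 6a, 7 and 8
        return {"status": 401}                    # step 6b

    def secret_exchange(self, consumer_key, nonce, secret):
        """Step 10: accept the secret only if the nonce is one we issued."""
        if self.pending_nonces.pop(nonce, None) != consumer_key:
            return 400
        self.client_secrets[consumer_key] = secret
        return 200


class ClientRegistrar:
    """In-memory stand-in for the client application's web site."""

    def __init__(self, consumer_key):
        self.consumer_key = consumer_key
        self.secrets_by_holder = {}

    def secret_for(self, holder_url):
        """Steps 3 and 4: return (or create) the secret for this data holder."""
        if holder_url not in self.secrets_by_holder:
            self.secrets_by_holder[holder_url] = secrets.token_urlsafe(32)
        return self.secrets_by_holder[holder_url]

    def secret_requested(self, holder, nonce):
        """Steps 7 to 9 and 11: push the secret back, revoke it on an error."""
        secret = self.secret_for(holder.url)
        status = holder.secret_exchange(self.consumer_key, nonce, secret)
        if status != 200:
            self.secrets_by_holder.pop(holder.url, None)
        return status


registrar = ClientRegistrar("https://MyAbbiApps.com/MyABBI")
holder = DataHolder("https://MyDataHolder.org")
app_secret = registrar.secret_for(holder.url)                      # steps 3 and 4
first = holder.request_token(registrar.consumer_key, registrar)    # rejected
second = holder.request_token(registrar.consumer_key, registrar)   # step 12
print(first["status"], second["status"])
```

The first request is rejected even though the secret arrives during the call, which mirrors the reject-and-retry choice in step 6.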

There are a couple of key points here that I’d like to explain further. I’m assuming TLS without client authentication, because that is just about how every consumer facing web site works (even those with patient portals). Making either the data holder or the client application’s web site use TLS with client authentication would simplify the steps here, and enable them to establish mutual trust. However, it would also make MyDataHolder.org have to provide a public certificate, which the web-site developer may not have access to, or vice-versa. I’d be very challenged to get access to the certificate securing https://abbi-motorcycleguy.rhcloud.com. It’s not mine. It belongs to https://rhcloud.com and so I can’t use it to verify a TLS connection as a client endpoint, it can only be used to verify the identity of the web server.

Without mutual authentication, the challenge in step 7 is when MyAbbiApps.com gets a request purporting to be from MyDataHolder.org asking for the secret associated with MyAbbiApps.com. It has no way to determine that the requester is indeed MyDataHolder.org without client authentication.

To resolve that problem, steps 8 and 9 come into play. Step 8 ensures that there is a way to synchronize the request with the response that comes asynchronously in step 9.

In Step 9, MyDataHolder.org finally gets the secret it asked for at step 7.

Note that we haven’t delayed the authorization request in step 6, we simply rejected it, expecting the client to retry in step 12. It is certainly feasible to delay the authorization step, but synchronizing these two separate threads of activity can be challenging, and isn’t necessary. It’s just necessary to get the client application to try again. With a sufficient time delay (e.g., one minute), the client application will be able to complete the authentication workflow just fine most of the time.
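On the client side, the reject-and-retry behavior amounts to a short loop. Here is a minimal Python sketch of it; the function name, the response dictionaries with a "status" entry, and the attempt limit are all assumptions made for illustration.

```python
import time


def authorize_with_retry(request_token, attempts=3, delay_seconds=60.0,
                         sleep=time.sleep):
    """Call request_token() until it succeeds, pausing between attempts."""
    for attempt in range(1, attempts + 1):
        response = request_token()
        if response["status"] == 200:
            return response
        if attempt < attempts:
            # Give the secret exchange time to finish before trying again.
            sleep(delay_seconds)
    raise RuntimeError(f"authorization failed after {attempts} attempts")


# Stand-in for a data holder that rejects the first request only.
replies = iter([{"status": 401}, {"status": 200, "oauth_token": "abc"}])
pauses = []
result = authorize_with_retry(lambda: next(replies), sleep=pauses.append)
print(result["status"], pauses)
```

Passing the sleep function in as a parameter keeps the example from actually waiting a minute when it is run.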

Steps 10 and 11 ensure that data holders respond appropriately to requests to send them a secret, and that the client web site revokes a secret it handed out that wasn’t accepted by a Data Holder.

The applications running behind MyAbbiApps.com and MyDataHolder.org need establish no mutual trust relationship initially. It is only when they become aware of each other that each can separately make a trust determination about the other based upon policies. MyDataHolder.org could refuse to trust a site whose certificate it didn’t like. Similarly, MyAbbiApps.com could refuse to trust a Data Holder it didn’t like. It’s completely up to them to configure the policy. In fact, the trust relationships need not even be based on the certificates, but could be based on the site URL. Blacklists could be used by either site as well to reject requests.

Now that I’ve worked out the Authorization Workflow, what about the normal API Authorization case? It shouldn’t be necessary for the data holder to get the consumer secret again for MyAbbiApps.com/ABBI, but if it were, it could simply start at step 6 with the request to obtain the secret. In this way, applications don’t even need to maintain any sort of persistent record of the client secret, because they have a way to obtain it again later.

What I’ve just described is what I call 4-legged OAuth. The three actors in 3-legged OAuth are:

1. Consumer (End User)

2. Client Application

3. Server (Data Holder)

We’ve just added the Client Registrar.

Friday, October 26, 2012

CMS Posts 2014 Meaningful Use Clinical Quality Measures, Electronic Specifications and Resources

Beginning in 2014, the reporting of clinical quality measures (CQMs) will change for all providers. Electronic Health Record (EHR) technology that has been certified to the 2014 standards and capabilities will contain new CQM criteria. Eligible professionals (EPs), eligible hospitals, and critical access hospitals (CAHs) will report using their respective new 2014 criteria regardless of whether they are participating in Stage 1 or Stage 2 of the Medicare and Medicaid EHR Incentive Programs.

The final 2014 CQMs for eligible professionals and eligible hospitals are now available, as well as the specifications for electronic reporting and access to the related data elements and value sets. The value sets define clinical concepts, providing a list of numerical values (e.g. code values from ICD-9, SNOMED CT, etc.) and individual descriptions for the clinical concepts (e.g. diabetes, clinical visit) used to define the quality measures. Each clinical concept referenced in a clinical quality measure is represented by a set of code values (a value set) it may take on.

e-Specifications

The value sets of the electronic specifications are the code values that the data elements (the clinical concepts) of the CQMs in your EHR may take on. These sets allow you to compute and export the measure results uniformly, and to report them in attestation. Vendors, EPs, and eligible hospitals may access the value sets via the National Library of Medicine Value Set Authority Center (VSAC). The value sets are available to view and download via several file formats and APIs (application programming interfaces). Additionally, the Data Element Catalog (DEC), which identifies data element names required for capture in EHR technology under the ONC certification program, is also available via the VSAC.

CMS has also posted Human Readable files of the e-specifications for eligible professionals and eligible hospitals that explain the measure, measure sponsor, measure rationale, and how the measure is calculated.

New 2014 CQM Resources

To help providers and vendors navigate the new CQMs, the following resources are available:

· United States Healthcare Knowledge Database (USHIK) AHRQ's website with both MU1 and MU2 clinical quality measures and other HIT resources. This site provides a number of formats for viewing, downloading, and comparing versions of CQMs and their value sets.

· Implementation Guide to the 2014 CQMs — Guidance for understanding and using the final CQMs, the Human Readable files, and the e-specifications.

· Release Notes — A comparison of the previous Stage 1 CQMs that are in effect through 2013, with the 2014 CQMs.

· CQM Tipsheet — An overview of the CQMs, including the number of measures EPs and eligible hospitals must report, CQM reporting options, and reporting and submission periods.

EHR CQM Certification

Certification of EHR technologies requires that EHR software products and EHR modules be tested, as applicable, for their capabilities to accurately capture, calculate and report the Clinical Quality Measure results. ONC has commissioned the development of the open source Cypress certification tool. Cypress has already encoded the measure logic required in a software implementation. The process for submitting the Cypress tool for official approval to be used in the ONC Certification Program is currently under way. Visit www.healthit.gov/cypress for more information.

Thousands of Providers and Apps for ABBI

The vision for ABBI is to enable thousands of providers to allow patients to automatically download data, possibly using thousands of various health applications. The challenge this creates is that for the eco-system to work, we need to ensure that 1000 apps X 1000 providers doesn't equal 1,000,000 application registrations for every app to work with every provider. I was reminded of this by a comment made by Josh Mandel yesterday on another of my ABBI posts.

I've been prototyping my efforts using OAuth 1.0, and have been following Twitter's pattern. With Twitter, you have to register your app, and get a client key and secret that your app will use later in the Authorization Workflow. But providers don't want to be in the credentialing business at all, and many would love to off-load that responsibility even for patients to others, so why would they now want to credential applications as well?

In the case of Twitter, it is the singular provider of the Twitter API, yet what we want for ABBI is to have numerous providers of the ABBI API. We don't want to have each of those applications to have to register with all of the possible data holders, because then the applications would have to have foreknowledge of all data holders. In fact, based on the consent model, there should not need to be any sort of business relationship between the application developers and the data holders. But we do want to make sure (from the patient's perspective) that these applications aren't just some rogue actor trying to obtain access to patient information.

I looked to see what the Rhex Project had done, considering they were implementing OAuth 2.0, but no luck. Page 11 of the Rhex OAuth 2.0 Profile (.doc) states:

Confidential Clients register a JSON Web Key (JWK) with a trusted Authorization Server prior to conducting health exchange transactions.

So even they require a registration step.

There are a couple of ways to avoid registration.

Don't Use oauth_consumer_key

OAuth 1.0 doesn't require the oauth_consumer_key to be present in the Authorization workflow or in API calls, nor is it required to have a value. The whole idea that the client application has to register could be ignored. But, one advantage that oauth_consumer_key and oauth_consumer_secret support is the idea that activities performed by applications on behalf of a user can be traced back to a specific application. This is rather beneficial. If my data was downloaded, I'd really like to know which app did it when I check the logs.

I'd like to avoid dropping the oauth_consumer_key if possible. There's simply no way to convey the client application identity if you do that.

Negotiate oauth_consumer_key without a Registration

OAuth 1.0 leaves completely unspecified the means by which the consumer_key and consumer_secret values are exchanged with the client application. This is by design. With Twitter, there's a developer's API page you use, and you request keys via the web. But there could be any number of other mechanisms by which client keys and secrets are exchanged, some of which could be done without a registration step.

Key features of the client key and secret that need to be preserved:

- The key needs to uniquely identify the client application, and be distinct from any other client application.

- The secret needs to be something that only the server (data holder) and the client application could have knowledge of.

I have a solution in mind that involves consumer key and secret exchanges with the client application's web-site. There are several challenges that have to be surmounted in order for this to work, but I'm going to spend some time thinking on this.

Wednesday, October 24, 2012

ABBI

I've written more than a score of posts on ABBI, and it's beginning to get difficult to remember the right keywords to find each one when I want to reference it. So it's well past time to write the TOC page for the ABBI Project. As I write more about ABBI, I'll update this page (which you can find in My Favorites).

You can find further material on ABBI by looking at my prototype, the source, or my kids' info-mercial.

- Not So Secret White House Meeting with Patients
  This is where it all started.

- Automate the Blue Button
  Mostly a reprint of the ONC Announcement, but also where my first cut at a proposal for PULL started from (IHE MHD + OAuth).

- "Ask for It" The Director's Cut
  My daughters' three minute info-mercial for ABBI.

- Automate the Blue Button PULL Proposal
  My first cut at a proposal for the PULL API.

- The Magical Little Blue Button
  In which I explain how the new Blue Button supports the requirements for View, Download and Transmit of Meaningful Use.

- What ABBI can do for Healthcare Cost Transparency
  If there was ever a post that I could say really had an impact on healthcare cost, this might be it someday. And some folks on the payer side seem to be taking this one pretty seriously. The idea is to allow patients to download their EOB data electronically in a machine readable format.

- Crowd Sourcing a Keynote
  Half summary of the session Adrian Gropper and I did at Health Camp, and half input to Dave deBronkart's keynote at Medicine 2.0 (where Abby again wows the crowd).

- Fall is Coding Season
  Trials and tribulations of maintaining a development environment, which is actually pretty revealing about my Architecture for the ABBI prototype.

- ABBI's First Data Holder Connection to IHE XDS via OHT
  My first bit of working code for the prototype.

- Provisioning an Application to automatically find ABBI's BlueButton
  Finding a web site's Blue Button interface should be as easy as finding your belly-button. This proposes a way to do that.

- A Bake Off
  I'm comparing IHE MHD, FHIR's XdsEntry, and what I composed for ABBI. You can too at the link in the explanation for the next post.

- I iz in UR Cloud. It iz mai Sandbox
  In which I launch the code I've been playing with into the cloud. Play with my prototype here.

CDA

Some of my posts on ABBI are related to CDA.

- Illegitimi Non Carborundum

A rant and recant in which I complain about attacks which I take rather personally.

- Forwards on ABBI

Mostly more ranting, but also explaining why I like this project so much.

- Why Not Both? The Genius of the AND, 2012 Edition

In which I move towards a content negotiation strategy for ABBI.

- Usability, CCDA Documents and ABBI

Give me my damn data. Make it pretty later. Because the key word in usability is use, not view.

- Styling CDA Based on Entry Content

In which I describe a technique that allows you to style narrative based on the data that reference it.

OAuth

A number of my posts deal with using OAuth. I've gathered these together here in this section.

- ABBI Security

Starting to delve into OAuth, and container managed security. This becomes important later.

- An OAuth Provider for ABBI

Just a little bit of architecture on my FIRST OAuth Provider that I developed for ABBI. It certainly works, but there's something better in the works...

- An OAuth Provider for Java based on Scribe

Having found and invested myself in Scribe, and then discovering it implemented only the client-side of OAuth, I now had to go pick something else. Or did I? This post explains how I enhanced Scribe to deal with the Provider side of OAuth. But wait...

- OAuth Enabling a Web Application

In which I refactor my OAuth implementation to make it easier to retrofit existing web apps.

Styling CDA Based on Entry Content

I've long advocated that CDA Entries be linked back to the narrative text that they are associated with. There are some very good reasons for doing this, and I discovered a new one yesterday. What that allows you to do is set display formatting based on what the narrative content means.

Suppose you had the following content in your CDA document:

<table border="1">

<thead>

<tr><th>Severity</th><th>Problem</th><th>Date</th><th>Comments</th></tr>

</thead>

<tbody><tr>

<td><content ID="severity-1">Severe</content></td>

<td><content ID="problem-1">Ankle Sprain</content></td>

<td>3/28/2005</td>

<td><content ID="comment-1">Slipped on ice and fell</content></td>

</tr></tbody>

</table>

Note the <content> elements and the ID attributes.

Assume you had an entry with the following content (I've removed all but the templateId and element container for the reference in the following for brevity):

<entry>

<observation classCode="COND" moodCode="EVN">

<templateId root="1.3.6.1.4.1.19376.1.5.3.1.4.5"/>

...<value code="44465007" codeSystem="2.16.840.1.113883.6.96"

codeSystemName="SNOMED CT" xsi:type="CV">

<originalText><reference value="#problem-1"/></originalText>

</value>...

<entryRelationship inversionInd="true" typeCode="SUBJ">

<observation classCode="OBS" moodCode="EVN">

<templateId root="1.3.6.1.4.1.19376.1.5.3.1.4.1"/>

...<value code="H" codeSystem="2.16.840.1.113883.5.1063"

codeSystemName="ObservationValue" displayName="High" xsi:type="CD">

<originalText><reference value="#severity-1"/></originalText>

</value>...

</observation>

</entryRelationship>

<entryRelationship inversionInd="true" typeCode="SUBJ">

<observation classCode="OBS" moodCode="EVN">

<templateId root="1.3.6.1.4.1.19376.1.5.3.1.4.2"/>

...<text><reference value="#comment-1"/></text>...

</observation>

</entryRelationship>

</observation>

</entry>

Note how each entry template includes a reference pointing back to the ID. Let's classify each of these references:

#problem-1: References the name of the problem. Suppose in this case, we'd like these to be in bold and small-caps.

#severity-1: References the severity of the problem. We don't want to do anything special with these.

#comment-1: References the comment on the problem. Suppose here we want this to be italicized, and furthermore, would like a mouse-over to display the author name.

This is a modest extension of the Stupid Mouse-Over trick that I teach when I talk about how to render CDA documents. You'll need a template for each special style you want to apply, and you'll also need to apply a template to the ID attribute that you invoke for each CDA narrative element you process.

Here's the template for the ID attribute.

<xsl:template match="@ID">

<xsl:attribute name="ID">

<xsl:value-of select="."/>

</xsl:attribute>

<xsl:variable name="theID" select="concat('#',.)"/>

<xsl:variable name="theRef" select="//cda:reference[@value=$theID]/../.."/>

<xsl:apply-templates select="$theRef" mode="style">

<xsl:with-param name="ID" select="."/>

</xsl:apply-templates>

</xsl:template>

This template copies the ID to the element you just created (e.g., <p>, <li>, <td> et cetera, using this mapping). It then locates any references to that ID in entries anywhere within the CDA document, and calls a template in the style mode to set the class attribute on the output element. That style template is invoked either on the value or code element (e.g., where originalText would appear), or on the entry element itself (when the reference appears in act/text).
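If you want to check the lookup outside of a stylesheet, the same XPath can be exercised from Java. The following is only a sketch: ReferenceLookup and containerOf are names I made up for illustration, and it uses local-name() in place of the cda: prefix so that no namespace context has to be set up.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.xpath.XPath;
import javax.xml.xpath.XPathConstants;
import javax.xml.xpath.XPathFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Node;

// Hypothetical helper (not part of the stylesheet): mirrors the $theRef lookup in Java.
final class ReferenceLookup {

    /** Returns the local name of the element two levels above the reference to #id, or null if none. */
    static String containerOf(String cdaXml, String id) {
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true);
            Document doc = factory.newDocumentBuilder()
                    .parse(new ByteArrayInputStream(cdaXml.getBytes(StandardCharsets.UTF_8)));

            XPath xpath = XPathFactory.newInstance().newXPath();
            // Bind $theID the same way the stylesheet does: '#' followed by the ID value.
            xpath.setXPathVariableResolver(name ->
                    "theID".equals(name.getLocalPart()) ? "#" + id : null);

            // local-name() stands in for the cda: prefix, so no NamespaceContext is needed.
            Node target = (Node) xpath.evaluate(
                    "//*[local-name()='reference'][@value=$theID]/../..",
                    doc, XPathConstants.NODE);
            return target == null ? null : target.getLocalName();
        } catch (Exception e) {
            throw new IllegalArgumentException("Could not evaluate the reference lookup", e);
        }
    }
}
```

For a reference inside value/originalText this lands on the value element, and for a reference inside an observation's text it lands on the observation, which is why the style templates match on those two kinds of elements.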

The next two examples show how to create templates that would set the style to use for the problem name and severity.

<xsl:template match="cda:value[

parent::cda:observation/cda:templateId/

@root='1.3.6.1.4.1.19376.1.5.3.1.4.5']" mode="style">

<xsl:attribute name="class">problem-name</xsl:attribute>

</xsl:template>

<xsl:template match="cda:value[

parent::cda:observation/cda:templateId/

@root='1.3.6.1.4.1.19376.1.5.3.1.4.1']" mode="style">

<xsl:attribute name="class">severity</xsl:attribute>

</xsl:template>

You may recall that I said we don't want to do anything special for severity. That's still true, but read on:

Here's the template for the comment.

<xsl:template match="cda:observation[

cda:templateId/@root='1.3.6.1.4.1.19376.1.5.3.1.4.2']" mode="style">

<xsl:param name="ID"/>

<xsl:attribute name="class">comment</xsl:attribute>

<xsl:attribute name="title"><xsl:apply-templates

select="ancestor-or-self::*[cda:author][1]/

cda:author[descendant::cda:assignedPerson][1]" mode="title"/>

</xsl:attribute>

</xsl:template>

I said we wanted a mouse-over to display the name of the author for the comment. To do that, we'll assign a title attribute to the wrapping tag, and fill it in with the author name, which means we have to find the author. The select attribute of the apply-templates requires a little bit of explanation. Starting from the element that holds the reference, it locates that element and every ancestor of it that has an author. These will be ordered in reverse document order, so the first of these will be the closest ancestor that assigns one or more authors to the entry. From there, we select the authors who are people rather than devices (this is what [descendant::cda:assignedPerson] does), and choose the first one of those (the [1]) to display in the title.

The final template converts a person name into a string appropriate for display in a title. It just concatenates the name parts with a space delimiter after each one (a purist would strip off the last space, but for this demonstration it doesn't matter).

<xsl:template match="cda:author" mode="title">

<xsl:for-each select="./cda:assignedAuthor/cda:assignedPerson/cda:name/cda:*">

<xsl:value-of select="."/>

<xsl:text> </xsl:text>

</xsl:for-each>

</xsl:template>

Finally, somewhere at the top of the XSLT stylesheet, you need to output a <style> element that contains the following:

<style>

...

.problem-name { font-weight: bold; font-variant: small-caps; }

.severity { }

.comment { font-style: italic; }

</style>

While we assign a class to severity in the generated HTML, the style associated with that class does nothing. This allows me to change my mind later about how severity would be represented in the output.

Using the sample file that I started with (one I used for CRS Release 1), the result looks something like this. Note that while I started with something old, the same techniques can be applied to CCD, C-CDA or any other set of templates using the CDA standard.

Good Health Clinic Care Record Summary

Conditions

| Severity | Problem | Date | Comments |

|---|---|---|---|

| Severe | Ankle Sprain | 3/28/2005 | Slipped on ice and fell |

ONC Challenge

ONC yesterday issued a design challenge for Blue Button. It should be fairly straightforward to apply this technique in a CDA Stylesheet that implements one of the chosen designs. What this technique does support is applying specific styles to narrative content that already appears and is linked to entries. What it won't do is generate narrative from the entries. For that, you'd need a different kind of style sheet, and that would need to be used by producers of CDA documents, rather than consumers of them. This technique can be used by either producers or consumers.

Tuesday, October 23, 2012

OAuth Enabling a Web Application

While most of what I post is of interest to just a Healthcare crowd, this particular post is of general interest, because the code I've put together should work with any kind of web site.

One of the discussions in the ABBI pull workgroup is about how to secure access to the API. We'd discussed using OAuth, but there are some concerns that it is too difficult to implement on an existing website. Having developed both client and server side RFC 5849 implementations (based on OAuth 1.0a), I decided to see what it would actually take. It turns out to be about 2000 lines of code and about a week of my time. Having done so, it should take an implementer using the tools I've produced only about a day.

To do this exercise, I needed to refactor my OAuth Provider implementation. There are two parts of OAuth. The first part is the Authorization Workflow, which involves a sequence of exchanges to obtain a credential used to access OAuth protected APIs. The second part is the Request Validation Process, used to verify the authorization credentials presented when accessing various pages. My original implementation was complicated because various parts of the Authorization Workflow also go through the Request Validation Process.

What follows below is a description of the code I put together that allows you to OAuth enable a web site. It is a fairly long post. If you want to cut to the chase, I won't be offended.

On a side note, although everything done here is Java based, the same kind of thing could be done for other kinds of sites. You should also be able to use the OAuthFilter and OAuthServlet in conjunction with IIS driven web sites that run inside of a Tomcat web server (or other Servlet 2.3/JSP 1.2 compliant container).

Authorization Workflow and Request Validation

The image below shows both the Authorization Workflow and use of OAuth Protected API calls. As you can see, validating that a request is authorized by an OAuth token appears in both places.

What I wound up doing in my refactoring was:

- Creating an Authentication Filter to Validate Requests. The OAuthFilter validates a request. On failure, it returns a 401 Unauthorized error to the User Agent. On success, it passes a wrapped request on to the web application. The wrapped request provides access to the authenticated user (the application) and the authorizing user via the getUserPrincipal, getRemoteUser and isUserInRole APIs of HttpServletRequestWrapper (and HttpServletRequest).

- Creating a Servlet that handles the Data Holder's side of the Authorization Workflow (the Data Holder is the Server in

- Creating a demonstration JSP Page to handle the "Authorization".

OAuthFilter

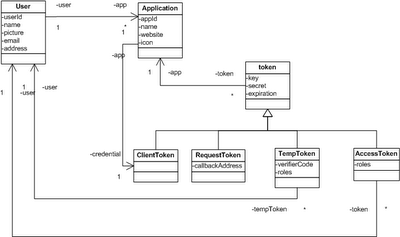

The OAuthFilter class is fairly simple. It relies on two pluggable components, an OAuthProvider and a Repository. The OAuthProvider class implements the verification and much of the Authorization Workflow. It delegates to the Repository to get access to tokens, users and applications. These objects are structured according to the model shown below (it was the lack of this model that led to the complexity of my original Provider implementation).

During initialization, the filter constructs instances of the specified OAuthProvider and Repository classes from the Filter initialization parameters, using defaults if they haven't been specified.

The filter protects all API pages, the OAuthServlet, and the Authorization page. The first thing it does is put the OAuthProvider instance into the Request context under the OAuth.Provider key. This is how the OAuthServlet and the Authorization page get the provider they need to use to manage the Authorization Workflow.

Next it checks to see if this is a request to the Authorization page. If it is, it just forwards the request on to that page. If it isn't, then the filter must validate the request. It extracts the OAuth parameters from the Authorization header, gets the tokens, and verifies that the signature is correctly computed. If the tokens aren't there, the Authorization header is missing, or the signature is incorrect, it returns a 401 Unauthorized to the caller, with a WWW-Authenticate: OAuth realm="realm" response header.

If the request is valid, then an OAuthRequestWrapper is created that will return an OAuthPrincipal in response to the getUserPrincipal() HttpServletRequest API call.
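The parameter extraction step can be sketched without any servlet dependencies. This is not the code from my filter; OAuthHeaderParser is a name invented for this illustration, and it assumes the header follows the RFC 5849 form of comma separated, percent-encoded name="value" pairs.

```java
import java.net.URLDecoder;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: pulls the parameters out of an "Authorization: OAuth ..." header value.
final class OAuthHeaderParser {
    private static final String SCHEME = "OAuth ";

    /** Returns the decoded parameters, or an empty map if this is not an OAuth header. */
    static Map<String, String> parse(String authorizationHeader) {
        Map<String, String> params = new LinkedHashMap<>();
        if (authorizationHeader == null) {
            return params;
        }
        String header = authorizationHeader.trim();
        // The scheme name is compared without regard to case.
        if (!header.regionMatches(true, 0, SCHEME, 0, SCHEME.length())) {
            return params;
        }
        // Simplification: assumes no unencoded commas inside quoted values (e.g. in realm).
        for (String pair : header.substring(SCHEME.length()).split(",")) {
            int eq = pair.indexOf('=');
            if (eq < 0) {
                continue; // not a name=value pair; skip it
            }
            String name = pair.substring(0, eq).trim();
            String value = pair.substring(eq + 1).trim();
            if (value.length() >= 2 && value.startsWith("\"") && value.endsWith("\"")) {
                value = value.substring(1, value.length() - 1);
            }
            params.put(percentDecode(name), percentDecode(value));
        }
        return params;
    }

    private static String percentDecode(String s) {
        // URLDecoder treats '+' as a space, which percent-encoding does not, so protect it first.
        return URLDecoder.decode(s.replace("+", "%2B"), StandardCharsets.UTF_8);
    }
}
```

A filter built this way would answer with the 401 response whenever the returned map is empty or lacks the parameters it needs, such as oauth_consumer_key or oauth_signature.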

OAuthUser

This class represents the means by which any application can get access to the name (actually the identifier) of the Application which is making the request, and the name of the User on whose behalf the requests are being made. This principal represents not the User, but rather the Application, because it is the Application that is the actor doing things, not the user. OAuthUser has one method that allows access to the id of the user being "impersonated" for the requests the application is performing (getImpersonating()).

Any API call protected by the OAuthFilter can obtain a reference to the OAuthUser class as follows:

OAuthUser u = null;

Principal p = request.getUserPrincipal();

if (p instanceof OAuthUser)

u = (OAuthUser)p;

This is essential so that the API knows which user's data to return.
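To make the shape of that principal concrete, here is a minimal stand-in. It is an illustration only: OAuthUserSketch and dataOwnerFor are hypothetical names, and the real OAuthUser class may carry more than the two identifiers shown here.

```java
import java.security.Principal;

// Illustrative stand-in for the OAuthUser principal described above; the real class may differ.
final class OAuthUserSketch implements Principal {
    private final String applicationId;
    private final String impersonatedUserId;

    OAuthUserSketch(String applicationId, String impersonatedUserId) {
        this.applicationId = applicationId;
        this.impersonatedUserId = impersonatedUserId;
    }

    /** The principal is the application, so its name is the application identifier. */
    @Override
    public String getName() {
        return applicationId;
    }

    /** The user on whose behalf the application is making requests. */
    public String getImpersonating() {
        return impersonatedUserId;
    }

    /** Whose data should an API call return? Null means the request was not OAuth-authorized. */
    static String dataOwnerFor(Principal p) {
        return (p instanceof OAuthUserSketch) ? ((OAuthUserSketch) p).getImpersonating() : null;
    }
}
```

An API page would call something like dataOwnerFor(request.getUserPrincipal()) and refuse to return anything when the result is null.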

Repository

The repository provides access to Tokens (by their key), Users (by ID), and Applications (by ID). It also provides an API to construct the tokens used in the Authorization Workflow, to remove used tokens, and to check and store nonce values. Most tokens don't need persistent storage in a database, but client (or consumer) and access tokens need long term persistence. For testing, I developed a repository that just stores all of these in memory. Users and Applications do need long term persistence, and so the MemoryRepository class is only a starting point.

Nonces only need to be stored for about 5 minutes or so (depending on the maximum time difference between an application request and response you want to support). I use two hash tables to deal with storing nonces. The first one is populated with all nonces created in the current 5 minute period. The second one is populated with the nonces created in the prior period. To check whether a nonce was already used, it is looked up in both tables, but when saving a nonce that has been used, it is stored in the first table. Every five minutes I throw away the older table, make the newer table the older one, and create a new empty table. It's very quick, and means that I don't need to do much to manage nonces.
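A minimal sketch of the two-table scheme follows. The names (NonceCache, checkAndStore) are invented for this example rather than taken from my repository code, and the caller passes in the clock value, which is an assumption I made to keep the sketch easy to test.

```java
import java.util.HashSet;
import java.util.Set;

// Hypothetical two-generation nonce store; not the actual MemoryRepository code.
final class NonceCache {
    private final long periodMillis;
    private Set<String> current = new HashSet<>();   // nonces seen in this period
    private Set<String> previous = new HashSet<>();  // nonces seen in the prior period
    private long periodStart;

    NonceCache(long periodMillis, long nowMillis) {
        this.periodMillis = periodMillis;
        this.periodStart = nowMillis;
    }

    /** Returns true and records the nonce if it is new; returns false if it was already used. */
    synchronized boolean checkAndStore(String nonce, long nowMillis) {
        rotateIfNeeded(nowMillis);
        if (current.contains(nonce) || previous.contains(nonce)) {
            return false;
        }
        current.add(nonce);
        return true;
    }

    private void rotateIfNeeded(long nowMillis) {
        long elapsed = nowMillis - periodStart;
        if (elapsed < periodMillis) {
            return; // still inside the current period
        }
        if (elapsed >= 2 * periodMillis) {
            // More than a whole period went by unused, so both tables are stale.
            previous = new HashSet<>();
            periodStart = nowMillis;
        } else {
            // Throw away the older table and make the newer table the older one.
            previous = current;
            periodStart += periodMillis;
        }
        current = new HashSet<>();
    }
}
```

With a five minute period, a nonce is remembered for somewhere between five and ten minutes, and cleanup never requires walking the tables.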

OAuthProvider

The OAuthProvider class provides methods to validate an HTTP Request, get tokens (from the repository) for each stage of the Authorization Workflow, check for prior authorization, authorize an application, and manage logging.
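The heart of request validation is recomputing the signature and comparing it to the one the client sent. The sketch below covers only the HMAC-SHA1 method from RFC 5849 and assumes the signature base string has already been assembled. HmacSha1Signer and its methods are names I made up for this example, not the API of my provider.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.MessageDigest;
import java.util.Base64;
import javax.crypto.Mac;
import javax.crypto.spec.SecretKeySpec;

// Hypothetical helper: HMAC-SHA1 signing and verification in the style of RFC 5849.
final class HmacSha1Signer {

    /** Percent-encodes per RFC 3986: only letters, digits, '-', '.', '_' and '~' stay unencoded. */
    static String percentEncode(String s) {
        return URLEncoder.encode(s, StandardCharsets.UTF_8)
                .replace("+", "%20")
                .replace("*", "%2A")
                .replace("%7E", "~");
    }

    /** Signs the base string with the key "consumerSecret&tokenSecret" (token secret may be absent). */
    static String sign(String baseString, String consumerSecret, String tokenSecret) {
        String key = percentEncode(consumerSecret) + "&"
                + percentEncode(tokenSecret == null ? "" : tokenSecret);
        try {
            Mac mac = Mac.getInstance("HmacSHA1");
            mac.init(new SecretKeySpec(key.getBytes(StandardCharsets.UTF_8), "HmacSHA1"));
            byte[] digest = mac.doFinal(baseString.getBytes(StandardCharsets.UTF_8));
            return Base64.getEncoder().encodeToString(digest);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("HmacSHA1 is not available", e);
        }
    }

    /** Recomputes the signature and compares it to the presented one in constant time. */
    static boolean verify(String baseString, String consumerSecret, String tokenSecret,
                          String presentedSignature) {
        byte[] expected = sign(baseString, consumerSecret, tokenSecret)
                .getBytes(StandardCharsets.UTF_8);
        byte[] presented = presentedSignature.getBytes(StandardCharsets.UTF_8);
        return MessageDigest.isEqual(expected, presented);
    }
}
```

The secrets come from the repository (looked up by consumer key and token), which is why a missing token is treated the same way as a bad signature.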

OAuthServlet

The OAuthServlet supports several steps of the Authorization Workflow. It exposes two endpoints in the web application context: /oauth/request and /oauth/access. The first endpoint is used by the client application to obtain a request token. The second endpoint is used by the client application to convert a temporary token into an access token, which can be used in subsequent API requests.

The Servlet obtains the OAuthProvider to use from the request context (stored under the OAuth.Provider key by the OAuthFilter). It checks to see if the request is for a request token or access token, and also determines whether the request being performed is appropriate to the role associated with the tokens used in the request. On failure here, the OAuthServlet returns a 404 Not Found error.

Otherwise, it performs the appropriate next step in the Authorization Workflow.

Authorization Page

The Authorization page is what connects OAuth to the rest of the web application. This page has two halves. The first half deals with fulfilling the Authorization Workflow. The second half deals with displaying to the user what they can authorize for the application. These are really two independent pieces, where only the second part should be customized by web applications. I could refactor this just a bit more by placing some of this in the OAuthServlet, and having the Authorization page be configured so that the servlet could forward to it as needed.

The page expects an authorization request containing a request token coming from the user agent (via a redirect from the data holder). When the page posts, it needs to include the roles that the application has been authorized to access on behalf of the authorizing user.

The Authorization page needs to be protected by container managed security. This is because the OAuthProvider used by this page associates the user identified by the Principal associated with the request by container managed security in the application. The ramification here is that Principal.getName() must be a unique identifier for each user.

Putting it All Together

OAuth enabling an application is pretty straightforward with the tools I've put together.

Here are the prerequisites:

- A Servlet 2.3/JSP 1.2 (or higher) enabled Web Container (my test Environment is Tomcat 5.0).

- A Web Application (existing site) that uses Container Managed Security to support login to your existing application.

And here are the steps to OAuth enable your application with these tools:

- Add the jar file containing the OAuthFilter and OAuthServlet to WEB-INF/lib in your application.

- Add about 50 lines to your application's web.xml to configure the OAuthFilter and OAuthServlet.

- Update the Authenticate.jsp page to support the roles needed by your application, and the user interface you want to present to users.

- Add the Authenticate.jsp page to the list of protected pages that require a login for your application.

- Create API pages that allow access to the services you want to expose.

- Ensure that your API pages are protected by the OAuthFilter.

All the code can be found here.

Next Steps

My next steps are to build a SQL Database backed Repository, and to package this code base a little more thoroughly (including creating the appropriate Jar and documentation files, which you have to build yourself today). The DatabaseRepository will implement a few more capabilities around users and applications, providing some of the attributes you saw in the class diagram at the beginning of this post.
